The long-awaited Worldwide Developers Conference (WWDC) took place on June 5-9, 2017 in San Jose, California. The annual conference organized by Apple is the ultimate event for iOS developers who want to keep up to date on the latest OS versions and upcoming features.

At Making Sense we have been absorbing new ideas and techniques to expand the possibilities of our apps, and we’ve been discussing all of the iOS 11 features announced at WWDC 2017. Let’s review them!

iOS 11

One of the most important announcements was the brand new version of Apple’s mobile operating system, iOS 11. Besides the user interface and user experience improvements typically included in each new version, we want to focus on a couple of new features.

One new feature is the way you access apps inside Messages: iMessage apps are now easier to reach from within a conversation. In addition, all of your conversations will be synchronized across your iOS devices. This also optimizes storage, since everything apart from your most recent messages is stored in iCloud.

Another excellent new feature is the ability to make person-to-person payments using Apple Pay. This is conveniently integrated into Messages as an iMessage app.

Drag and Drop

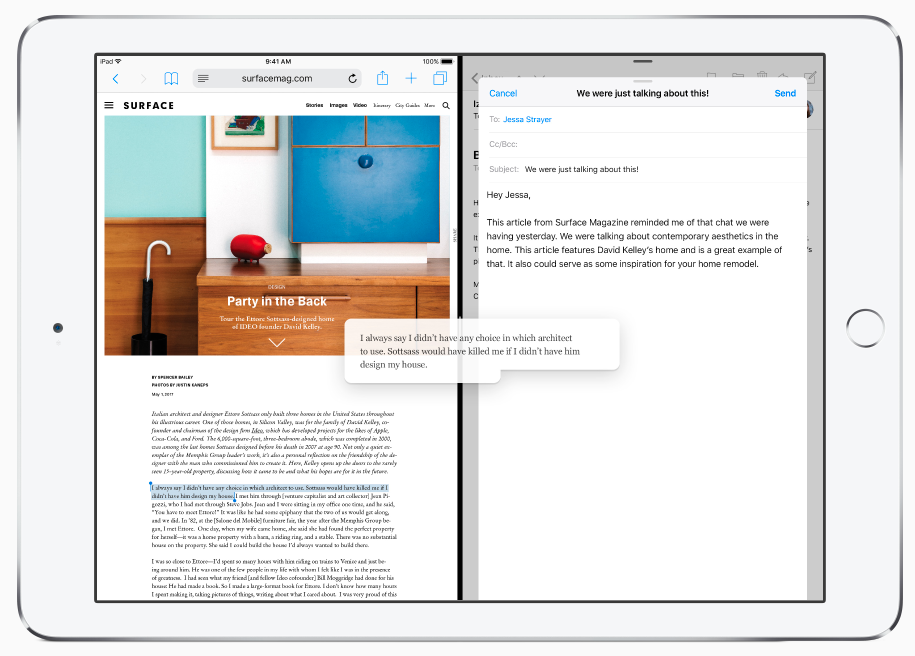

One of the most exciting iOS enhancements was the introduction of drag and drop on the iPad. You are now able to drag and drop things from one place to another, whether within a single app or across separate apps.

As a user, you can also take advantage of other advanced multitouch interactions to continue grabbing additional items to eventually send to the destination app.

For developers, Apple provides an easy-to-implement approach for both UITableView and UICollectionView, so adding drag and drop to the lists in your app takes little code.
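To give an idea of how little is needed, here is a minimal sketch of a table view whose rows can be dragged out as text. The class name `NotesViewController` and the `items` array are our own illustrative assumptions; the delegate protocol and its method come from UIKit in iOS 11.

```swift
import UIKit

// Hypothetical view controller: the class name and sample data are
// illustrative only and do not come from Apple's sample code.
final class NotesViewController: UITableViewController, UITableViewDragDelegate {
    private var items = ["Buy milk", "Call the client", "Ship the build"]

    override func viewDidLoad() {
        super.viewDidLoad()
        tableView.register(UITableViewCell.self, forCellReuseIdentifier: "Cell")
        tableView.dragDelegate = self
        // Drag interactions are on by default on iPad and off on iPhone.
        tableView.dragInteractionEnabled = true
    }

    override func tableView(_ tableView: UITableView,
                            numberOfRowsInSection section: Int) -> Int {
        return items.count
    }

    override func tableView(_ tableView: UITableView,
                            cellForRowAt indexPath: IndexPath) -> UITableViewCell {
        let cell = tableView.dequeueReusableCell(withIdentifier: "Cell", for: indexPath)
        cell.textLabel?.text = items[indexPath.row]
        return cell
    }

    // Called when the user starts dragging a row: wrap the row's text in an
    // item provider so that other apps can receive it as a string.
    func tableView(_ tableView: UITableView,
                   itemsForBeginning session: UIDragSession,
                   at indexPath: IndexPath) -> [UIDragItem] {
        let provider = NSItemProvider(object: items[indexPath.row] as NSString)
        return [UIDragItem(itemProvider: provider)]
    }
}
```

Accepting drops works the same way through `UITableViewDropDelegate`, which we left out to keep the sketch short.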

It is a great opportunity to provide a real multitasking experience by letting our apps interact with other apps, allowing the sharing of information.

Take a look at Apple’s drag and drop documentation to read more about it.

New SDKs

Now let’s review some interesting Software Development Kits (SDKs) that will be available on iOS 11.

ARKit

Apple introduced ARKit, a framework that provides APIs for integrating augmented reality (AR) into our apps. On iOS, AR can combine the live view from the device camera with objects you programmatically place in the view, depending on the context. That’s why it’s called Augmented Reality: it takes the real world and adds content to it, providing the user an experience where the real world and the content of your app can live together. Let’s see how it works!

We find this feature very useful for making immersive apps, and we consider it one of the biggest improvements Apple has made in iOS 11. Imagine how we can now enhance brand loyalty by providing an AR experience. Users will be able to interact with our products from anywhere, as long as they have an iPhone or iPad. For example, an AR experience for the “fidget spinner” should be an easy win for keeping that brand at the top.

But developing an Augmented Reality app without some sort of help can be very difficult. As a developer, you need to figure out where your “virtual” objects should be placed in the “reality” view, how the objects should behave, and how they should appear. This is where Apple comes to the rescue with ARKit to make the developer’s life easier.

ARKit can detect “planes” in the live camera view, essentially flat surfaces where you can programmatically place objects so it appears they’re actually sitting in the real world. At the same time, it uses input from the device’s sensors to ensure these items remain in the correct place.
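As a rough illustration of plane detection, the sketch below runs a world-tracking session and drops a small box on every horizontal plane ARKit finds. The class name `PlaneViewController` and the box size are our own assumptions. We use the configuration class name from the final iOS 11 SDK (`ARWorldTrackingConfiguration`); the early betas used a slightly different name. The app also needs a camera usage description in its Info.plist.

```swift
import ARKit
import SceneKit
import UIKit

// Hypothetical view controller: names and dimensions are illustrative only.
final class PlaneViewController: UIViewController, ARSCNViewDelegate {
    private let sceneView = ARSCNView()

    override func viewDidLoad() {
        super.viewDidLoad()
        sceneView.frame = view.bounds
        sceneView.autoresizingMask = [.flexibleWidth, .flexibleHeight]
        sceneView.delegate = self
        view.addSubview(sceneView)
    }

    override func viewWillAppear(_ animated: Bool) {
        super.viewWillAppear(animated)
        // Track the device's position and look for flat, horizontal surfaces.
        let configuration = ARWorldTrackingConfiguration()
        configuration.planeDetection = .horizontal
        sceneView.session.run(configuration)
    }

    override func viewWillDisappear(_ animated: Bool) {
        super.viewWillDisappear(animated)
        sceneView.session.pause()
    }

    // ARKit calls this after it adds a node for a newly detected anchor.
    func renderer(_ renderer: SCNSceneRenderer, didAdd node: SCNNode, for anchor: ARAnchor) {
        guard let plane = anchor as? ARPlaneAnchor else { return }

        // A 10 cm cube, raised by half its height so it rests on the plane.
        let box = SCNNode(geometry: SCNBox(width: 0.1, height: 0.1,
                                           length: 0.1, chamferRadius: 0))
        box.position = SCNVector3(plane.center.x, 0.05, plane.center.z)
        node.addChildNode(box)
    }
}
```

Because the box is a child of the node ARKit manages for the plane, it stays attached to the surface as tracking is refined.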

Check out Apple’s ARKit documentation for more.

Machine Learning

Machine Learning (ML) is a complicated topic. The Core ML framework was introduced at WWDC to help more developers apply this advanced technique. Core ML provides an API where, given a trained model and some input data, developers receive predictions about that input based on the trained model.

Apple provides a good use case for Core ML: predicting real estate prices. There’s a lot of historical data on real estate sales, and this data is used to train the model. Once the trained model is in the proper format, Core ML can use it to predict the price of a piece of real estate based on data about the house, such as the number of bedrooms (assuming the training data contains both the final sale price and the number of bedrooms for each property). Apple even supports trained models created with certain supported third-party packages.
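To make the "trained model plus input data gives a prediction" idea concrete, here is a small sketch using Core ML's generic `MLModel` API. Everything about the model itself is an assumption on our side: the function name `predictPrice`, the input feature names (`bedrooms`, `bathrooms`, `squareFeet`) and the output name (`price`) are hypothetical and must match whatever your own model declares.

```swift
import CoreML
import Foundation

// Hypothetical helper: the feature names below are illustrative and have to
// match the inputs and outputs declared by your own trained model.
func predictPrice(modelURL: URL,
                  bedrooms: Double,
                  bathrooms: Double,
                  squareFeet: Double) throws -> Double? {
    // `modelURL` must point to a compiled model (a .mlmodelc bundle).
    let model = try MLModel(contentsOf: modelURL)

    // Wrap the input data in a feature provider keyed by feature name.
    let input = try MLDictionaryFeatureProvider(dictionary: [
        "bedrooms": bedrooms,
        "bathrooms": bathrooms,
        "squareFeet": squareFeet
    ])

    // Ask the model for a prediction and read the (assumed) "price" output.
    let output = try model.prediction(from: input)
    return output.featureValue(for: "price")?.doubleValue
}
```

In practice, Xcode also generates a typed Swift class for each model you add to a project, which is usually more convenient than the dictionary-based approach shown here.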

We think this is one of the hottest features yet, as Machine Learning is beginning to permeate our lives even if we don’t notice it. As users become more demanding, we need to provide better UX, and Core ML will help developers achieve that. We love it!

Core NFC

Apple has officially opened its Core NFC API for developers in iOS 11. With this development, the iPhone will be able to detect NFC tags and read messages that contain NDEF data. It is a feature that already exists on Android, so congratulations to Apple for giving iPhone users the same capability.
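Reading a tag takes only a session and a delegate. The sketch below is a minimal example under a few assumptions: the class name `TagReader` and the alert text are ours, and the app must have the NFC tag reading capability enabled plus an NFC usage description in its Info.plist.

```swift
import CoreNFC

// Hypothetical reader class: the name and messages are illustrative only.
final class TagReader: NSObject, NFCNDEFReaderSessionDelegate {
    private var session: NFCNDEFReaderSession?

    func beginScanning() {
        // Stop automatically after the first tag has been read.
        let session = NFCNDEFReaderSession(delegate: self,
                                           queue: nil,
                                           invalidateAfterFirstRead: true)
        session.alertMessage = "Hold your iPhone near the tag."
        session.begin()
        self.session = session
    }

    // Called when one or more NDEF messages have been read from a tag.
    func readerSession(_ session: NFCNDEFReaderSession,
                       didDetectNDEFs messages: [NFCNDEFMessage]) {
        for message in messages {
            for record in message.records {
                print("Record with \(record.payload.count) payload bytes, " +
                      "type name format \(record.typeNameFormat.rawValue)")
            }
        }
    }

    // Called when the session ends, including the normal end after a read.
    func readerSession(_ session: NFCNDEFReaderSession,
                       didInvalidateWithError error: Error) {
        print("Session ended: \(error.localizedDescription)")
        self.session = nil
    }
}
```

How you interpret each record's payload depends on the kind of data stored on your tags, so we only log its size here.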

Unfortunately, this feature will only be available on iPhone 7 and iPhone 7 Plus, but support should expand as new iPhone models become available.

In our opinion, Apple is a bit late to the NFC world with iOS, but better late than never, right? Android has supported this feature for many years, so it was only a matter of time before Apple opened its SDK to developers. Either way, we consider this good news, and a feature to take into consideration when designing applications that need to read information from NFC tags.

If you want to learn more about Core NFC, take a look at Apple’s Core NFC documentation.

Xcode 9

Xcode 9 has a brand new Source Editor, entirely written in Swift. Xcode’s editor has always been odd and code completion was notoriously CPU-demanding. With this new version, it should work properly.

Scrolling has also been improved and so has code completion. You are now better able to customize code fonts, line spacing and cursor type.

In the new editor you can use the Fix interface to fix multiple issues at once. Also, when moving the mouse around your code, you can hold the Command key to see how your code is structured and organized.

As an unexpected feature, the new source editor includes an integrated Markdown editor, which will help immensely when creating GitHub READMEs.

We are excited about the improvements in the most popular IDE for iOS, and look forward to experiencing better performance and an improved developer experience.

For more information about the new features of Xcode, check What’s New in Xcode 9 on Apple’s website.

Swift 4

Swift 4 is the latest major release of Apple’s programming language, although it will remain in beta until Fall 2017. Its main focus is providing source compatibility with Swift 3 code as well as working towards ABI stability.

Swift 4 is included in Xcode 9, so if you have an Apple developer account you can download the beta and test it out. The migration process is not as difficult as it was from Swift 2 to Swift 3, and Swift 4 provides some interesting features to keep in mind.
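As a small taste of the language changes, the sketch below combines two Swift 4 additions: multi-line string literals and the `Codable` protocol for JSON encoding and decoding. The `Talk` type, the `decodeTalk` helper and the sample JSON are our own illustrative choices.

```swift
import Foundation

// Hypothetical model type: conforming to Codable is all that is needed
// for JSONDecoder and JSONEncoder to handle it.
struct Talk: Codable {
    let title: String
    let year: Int
}

// Decodes a Talk from a JSON string, throwing if the JSON does not match.
func decodeTalk(from json: String) throws -> Talk {
    return try JSONDecoder().decode(Talk.self, from: Data(json.utf8))
}

// Multi-line string literals are delimited by three double quotes.
let sampleJSON = """
{
    "title": "What's New in Swift",
    "year": 2017
}
"""

if let talk = try? decodeTalk(from: sampleJSON) {
    print("\(talk.title) (\(talk.year))")
}
```

Before Swift 4, the same task typically meant parsing with `JSONSerialization` and casting each field by hand, so this is a change many projects can benefit from right after migrating.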

As usual, it may be a bit tedious to migrate from one version to another, but if it is for the sake of the language, we welcome the change.

We recommend taking a look at the updated official Swift documentation to learn more about this new version of this popular programming language.

—

Our mobile development team is very excited about all the new possibilities with iOS 11, Xcode 9 and Swift 4. We think WWDC 2017 was worth the wait, and now it’s time for us to learn all these new technologies. That way, we can continue to bring our clients the best possible mobile products on the market.

Take a look at the full event video here!