Disclaimer: If this is your first time reading this series on our blog, you should go a few steps back and read the first and second pieces of this amazing series. Where? Just here and here.

At this point, we are coming to the end of the AI pipeline. Our AI system has been collecting data, exploring it, and has built a model based on the previous steps.

Now it’s time to test the model before deploying it, and that is what today’s post (the last in this series) is about.

4. Model evaluation

We’re a bit ahead of ourselves. Back to the scenario where we are building a model. Assuming we can measure and publish some of the research we’ve done…

4.1. Reproducibility crisis

…sometimes we don’t have enough resources to ensure that our experiments are perfectly reproducible. We might not have accounted for all the variables, which means we might be accepting, knowingly or unknowingly, the risk of some bias. We might just be focused on getting our product out the door because that’s what will get us our paycheck.

While this is very similar in concept to the Technological monopoly problem we just explored, there is a slight difference: this is not about the economic impact, but rather the impact on science and knowledge itself. Think of the problems we explored with the Proprietary algorithm: the knowledge is only in the hands of those who built it. So if that’s the case… how are we ever going to reproduce those studies? Will we ever have good sources that explain how feasible these results are?

The answer is, sadly, no.

You might have heard the term “reproducibility crisis” before, outside of the context of AI. It is not a problem specific to AI, but one that affects the whole scientific community. To learn and improve, experiments must be run again and again, with their workings twisted and turned, until we are sure of the conditions behind our results. Only then can scientific knowledge be settled.

A great business investment in AI does not have scientific progress high up on the list of priorities.

One final aspect to consider in this reproducibility crisis is that, because most research is driven by… well, funding… and payments are given in direct relation to the publications, researchers are forced to publish. Most of the time, research ends up in boring no-result findings (which is knowledge too!). But a publication titled “No link found between jelly beans and acne” doesn’t bring in the payments (see the explained version of the comic, which covers the ethical problem with forcing results in research).

4.2. False positives and false negatives

One thing that AI experts know and are not really proud to say is that AI is imperfect by definition. AIs are algorithms that learn to approximate a function (the “thing” we want it to learn) as closely as possible… but it’s always going to be an approximation. If we knew the exact result for all cases, there would be no need for an AI: we would just code the right answer and have a perfect system working.

This means that an AI algorithm is always going to make mistakes. The whole process of creating these models focuses on reducing those errors, but it’s impossible to get rid of them all.

Because scientists tend to be very creative with naming, they called the first kind of errors (false positives) Type I errors. These are the mistakes that a model makes when it thinks it found what it was looking for, but it was wrong.

Following this creative stroke of genius, false negatives are Type II errors. This is the case where the model predicts that whatever it was looking for is not there, but it actually was.
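To make the two error types concrete, here is a minimal sketch that counts them for a binary classifier. The labels below are made up for illustration, and `count_errors` is a hypothetical helper, not part of any particular library.

```python
def count_errors(actual, predicted):
    """Count the four possible outcomes of a binary classifier.

    `actual` and `predicted` are equal-length sequences of booleans,
    where True means "the thing we are looking for is present".
    """
    counts = {"tp": 0, "fp": 0, "tn": 0, "fn": 0}
    for truth, guess in zip(actual, predicted):
        if guess and truth:
            counts["tp"] += 1  # correctly found
        elif guess and not truth:
            counts["fp"] += 1  # Type I error: false alarm
        elif not guess and truth:
            counts["fn"] += 1  # Type II error: missed case
        else:
            counts["tn"] += 1  # correctly ruled out
    return counts


# Made-up labels for eight samples, purely for illustration.
actual = [True, True, True, False, False, False, False, True]
predicted = [True, False, True, True, False, False, False, True]

print(count_errors(actual, predicted))
```

With these invented labels the model makes one mistake of each type: one false alarm and one missed case.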

Spoiler alert: we cannot make both of those measures 0% for a model at the same time.

Which is worse? It depends on the case, and how your model is designed. If you’re looking to detect cancerous cells, you want to ensure there are no false negatives at all. It’s better to mistakenly diagnose cancer, which will lead to more in-depth testing and confirmations, than to mistakenly say patients are safe and send them on their way.
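The trade-off can be sketched with a decision threshold. Many models output a score rather than a yes/no answer, and where we draw the line decides which kind of error we make more often. The scores, labels, and costs below are invented for illustration; `errors_at` and `cheapest_threshold` are hypothetical helpers.

```python
# Hypothetical model scores (higher = "more likely cancerous") with true labels.
samples = [
    (0.95, True), (0.80, True), (0.60, True), (0.40, True),
    (0.70, False), (0.50, False), (0.30, False), (0.10, False),
]


def errors_at(threshold, samples):
    """Return (false_positives, false_negatives) when scores >= threshold are flagged."""
    fp = sum(1 for score, truth in samples if score >= threshold and not truth)
    fn = sum(1 for score, truth in samples if score < threshold and truth)
    return fp, fn


def cheapest_threshold(thresholds, samples, fp_cost, fn_cost):
    """Pick the candidate threshold with the lowest total error cost."""
    def cost(threshold):
        fp, fn = errors_at(threshold, samples)
        return fp * fp_cost + fn * fn_cost
    return min(thresholds, key=cost)


candidates = (0.2, 0.45, 0.65, 0.9)
for threshold in candidates:
    fp, fn = errors_at(threshold, samples)
    print(f"threshold={threshold:.2f}  false positives={fp}  false negatives={fn}")

# Assumption: a missed cancer is treated as ten times worse than a false alarm.
print(cheapest_threshold(candidates, samples, fp_cost=1, fn_cost=10))
```

On this toy data, lowering the threshold removes false negatives but adds false positives, and raising it does the opposite. Once a missed case is weighted as far more costly than a false alarm, the lowest threshold wins, which matches the cancer-screening reasoning above.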

5. Model deployment

5.1. Explainability

After the model is live and working, it starts making predictions and we use its results, probably as part of another system. This system may or may not be a computer system. An OCR feature inside a receipt-capturing application is a model integrated in a computer system. A loan-application system, in which decisions are always made with a human, is still part of a system: a non-computer system where the output of the model is the input that the human takes.

When decisions are made, it is important that we know why these decisions are made. In the case of a loan-application system, there might be laws that require you as a company to justify the reasons why you deny loans to people. In the case of a cancer-detection imaging system, the reasons are what a doctor can use to decide if the diagnosis makes sense. Situations like these are, literally, life or death decisions.

Some model algorithms are inherently more explainable than others. A few are still seen as “black boxes” — this was the case with Neural Networks until a few years back. This means that sometimes, having a model that works comes at the cost of not knowing exactly why.
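As a rough illustration of what “inherently explainable” can mean, consider a linear scoring model: its score is a sum of per-feature contributions, so each decision can be broken down and read. The sketch below uses invented weights, feature names, and an invented applicant; it does not describe any real loan system.

```python
# Hypothetical weights of a linear loan-scoring model (not from any real system).
WEIGHTS = {"income_k": 0.04, "debt_ratio": -3.0, "years_employed": 0.2}
BIAS = -1.0


def explain(applicant):
    """Return the score and each feature's contribution to it."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    score = BIAS + sum(contributions.values())
    return score, contributions


# Invented applicant: 40k income, 60% debt ratio, 2 years employed.
applicant = {"income_k": 40, "debt_ratio": 0.6, "years_employed": 2}
score, contributions = explain(applicant)

# Assumption: the loan is approved when the score is zero or higher.
print("approved" if score >= 0 else "denied")

# List the features from most harmful to most helpful for this applicant.
for name, value in sorted(contributions.items(), key=lambda item: item[1]):
    print(f"{name}: {value:+.2f}")
```

Here the breakdown shows that the debt ratio is what pulls the score below zero, which is the kind of reason a company could give an applicant. A black-box model offers no such direct reading.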

The ethical dilemma of this seems pretty obvious when put that way: if we cannot explain exactly why the predictions are what they are, we cannot challenge them or correct them. And comparing them against the ground-truth (that is, what we know for sure) is not going to tell us more, because the errors in the model might simply mirror the bias in the data. Meaning that if the model is failing successfully, we might never know it.

5.2. Societal impact

As models start being used to make decisions and we incorporate them into our daily lives, or into the products that we use, they start to have an impact on the general culture that we live in. Like any new piece of technology that makes it into our society, they can be used or misused.

The benefit of a technology like AI is making difficult tasks (that required “intelligence” of some sort) easier for a computer. Auto-generating music, or recognizing speech (“Alexa, play Despacito”) sound like the kind of applications that benefit us all. But as beneficial tasks get simpler, so do malicious ones.

A common occurrence today in the digital age of media is that we trust things that we can see with our own eyes. While a video could always be faked, acted, or manipulated, it was hard to do so, and hence unusual. Deep fakes are an example of how creating a video of someone who was never there is easier than ever. Not even original video feeds are needed; a single picture is enough.

It’s not just video that can be faked, but content too. As algorithms get more powerful and more available, they can start generating all types of content, from music to fake news. And if you believe this wouldn’t go very far because the creator would eventually be caught, think bigger. Not even giants like Facebook, Google and Twitter can fight these issues, which sometimes take tolls on real people’s lives. You might want to see Destin Sandlin’s (SmarterEveryDay) amazing investigations called Manipulating the YouTube Algorithm, The Twitter Bot Battle (Who is attacking Twitter?) and Who is manipulating Facebook? You’ll be amazed that attackers can get so sophisticated with such simple tools and that they can do so much (sometimes, unfortunately, to the point of actual genocide).

Even if it gives it a bad name, this is all AI-powered. Yet, it doesn’t mean that AI is a tool for evil, but rather that it’s a powerful tool, and we need to deal with the ethical implications of its use.

5.4. Societal preparation

Sometimes tools are just too advanced for us to use – like toddlers who get access to knives – because as a species we’re not quite ready to make good use of them. Insights come in all colors and shapes, and scientific progress is not always right if we cannot handle it correctly. No, I’m not talking about atomic power but that’s a great example of how great things can go awry.

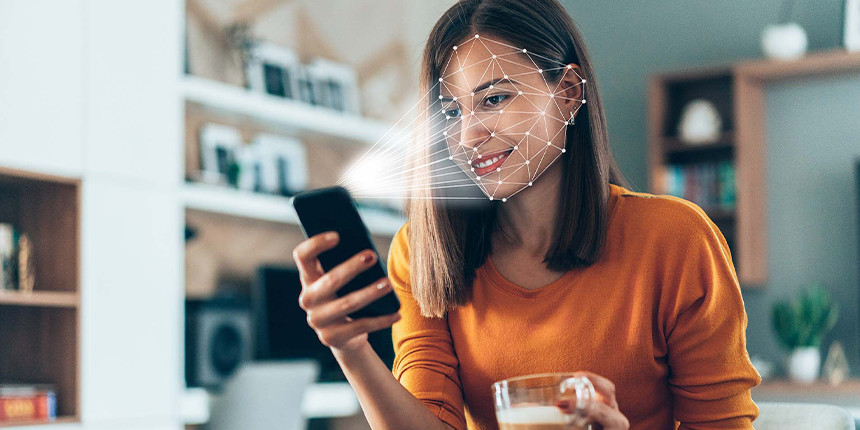

You might remember a study that made the news because it created a model that was able to predict if you were straight or gay based on your photograph. Now, that study has been debunked since then (thanks to the Reproducibility Crisis goddess!), but what if it hadn’t been? Would we, mankind, be ready to accept the knowledge that you could predict, from a photograph, a trait that in some places carries a death sentence? Not to mention that, because of the technological monopolies we’ve discussed before, it would mean that big corporations and governments would be able to apply all of these tools, leaving individuals defenseless.

5.5. Data privacy (take 3)

We’ve already discussed why models are data-hungry when trained. But like any algorithm, they require some input so that the output is meaningful. For instance, a real-estate price-estimating algorithm is meaningless unless you provide it with some information about the place you want it to estimate.

So models also need data to work, which is, to some extent, even expected. But some algorithms need a constant stream of data to work properly.

An example of this might be the data tracking built into almost all web pages around the world, used so that advertisers can predict the best ad to show to each user. This is such a serious deal that GDPR laws have forced almost all of the internet to ask whether you want to be tracked. You might have been surprised to learn (I was too) that almost all sites have some flavour of this tracking.

But that might be too abstract to grasp. So here’s another example that is closer to home: how does Siri know when we utter the words “hey Siri,” between all the things that we say? Well, “speech recognition” is only part of the answer. The other part is: Siri’s always listening.

Aside from data privacy concerns like Smart TVs and speech recognition, this has opened up a new world of attacks for hackers.

6. Overall

6.1. Regulation

Yet, there are aspects to consider that do not fit anywhere into this pipeline that we’ve imagined, for instance, laws.

So far we’ve seen how AI systems have the possibility of doing a lot of good, and a lot of evil in our world. So, how do we ensure that it is used for the right thing?

Back to the age-old question: if a driverless car hits someone, who is to blame? This question is no longer hypothetical. Regulations should step in and define a responsible party. Laws are not in place just to point fingers, but also to make someone accountable for making things better.

To make things worse, regulation-making is a slow process, while model building gets faster and more powerful by the day (GPT-3 has been replicated with 0.1% of the power, and cars can be driven with 19 neurons). It makes sense that regulation is a slow process: politicians consult experts who in turn need to assess and provide them with details of what the state-of-the-art technology is, how it works, what can turn out well and what can turn out badly. While investigations are made, papers are written, and sessions are held in congress, new breakthroughs are being made. How are we going to ensure that we set up fair, up-to-date laws that do not allow for loopholes and evil usage? This is an open-ended question.

6.3. Human baselines

Pragmatism is also an ethical choice. We might not have perfect algorithms, but in some cases, they have already surpassed human capabilities. Cars might not make perfect decisions, sometimes with dire consequences, but if they drive better than the average human… shouldn’t we use them? Algorithms for detecting cancer might not be perfect, but if they work better than the average doctor… shouldn’t we use them?

There are voices for and against these choices, some of them focused on the fact that we’re still not ready, some of them claiming that we need to get our regulatory practices straight first. But having a tool that improves our lives and not using it is an ethical decision. So is having a dangerous tool that can make our lives worse and deciding to use it without being prepared.

Moreover, for some of the discussions about ethics in AI, the point isn’t really about AI. “Who will a driverless car kill?” is actually a nonsensical question. Forget about AI: who would you kill? And this is not a hypothetical question. This happens. Every day.

6.4. Jobs

The creation of new technology and tools always brings forth the discussion of what will happen with existing jobs. Sure, new automation tools, especially intelligent tools, will make a lot of jobs disappear.

A superficial look into this tells us that this is not a big issue, because while one set of jobs disappears (like the repetitive factory worker being replaced by a robot), new jobs appear (for instance, the supervisor or trainer for the robot).

But jobs disappearing and jobs appearing are not the same. The same thing happened with industrialization, when a lot of manual and dull work was replaced by machines, giving rise to a new set of jobs to control those machines. What happens to the people who do not know how to operate a machine?

“Just press this button and it works” is the type of premise that we see in movies, but in reality, people need to have higher education levels, or specific training to be able to take these new jobs. This works just fine in a society that has everyone educated and prepared for the future. But alas, we don’t live in that perfect society just yet.

When the models themselves become so much better at what they do (see 6.3 Human baselines), how will we correct them? Even now, some of us fail CAPTCHAs, the reverse Turing tests meant to prove to a machine that we are human.

Conclusion

Today we explored a systematic approach to identifying the ethical issues with AI, but there are other approaches.

This makes sense, since ethics in AI is no small topic to discuss. It covers all aspects that any popular technology touches, from business to scientific discovery, to human safety. There is no clear one-size-fits-all answer that currently covers the questions that we posed today. But knowing what they are is a great first step toward making humane, ethical decisions.